Unmanned Platforms: What's Working Today

Explore the current capabilities of unmanned platforms including static obstacle avoidance and edge object detection. Understand what's production-ready and what's still years away. Learn how to evaluate autonomy features for defense tech and share your insights with the community.

3/14/2026 · 1 min read

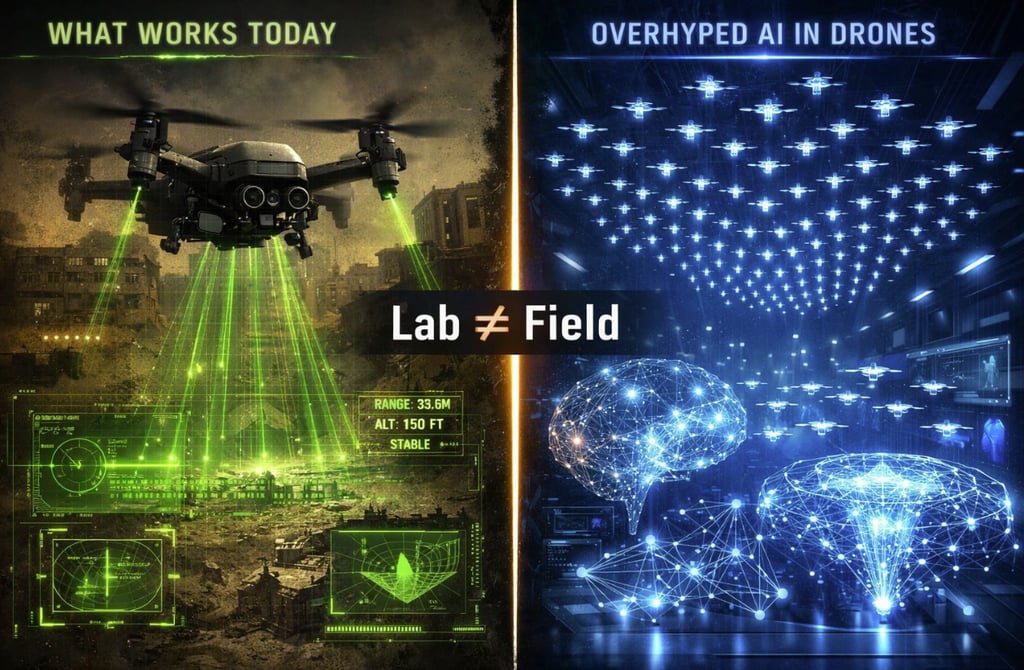

What works today (production-ready) in unmanned platforms:

1. Static obstacle avoidance

• LiDAR + stereo vision detect trees, buildings, wires

• Works in known environments, good lighting

2. VIO (visual-inertial odometry) position hold

• Camera + IMU + Barometer = stable hover without GPS

3. Pre-planned waypoint missions

• GNSS + IMU fusion, reliable in open terrain

4. Basic return-to-home

• Triggered by low battery/signal loss

5. Edge object detection

• INT8 models on Jetson/Snapdragon, 15-40 FPS (depending on the resolution)
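The return-to-home trigger in item 4 is simple enough to sketch in a few lines. The example below is a minimal illustration, not any autopilot's actual failsafe logic: the `VehicleStatus` type, the `should_return_home` function, and both threshold values are hypothetical and chosen only to show the shape of the check.

```python
from dataclasses import dataclass

# Hypothetical thresholds, picked for illustration only.
LOW_BATTERY_FRACTION = 0.25   # trigger at or below 25% remaining charge
LINK_TIMEOUT_S = 3.0          # trigger after 3 s without a valid link packet


@dataclass
class VehicleStatus:
    battery_fraction: float     # remaining charge, 0.0 to 1.0
    seconds_since_link: float   # time since the last valid control-link packet


def should_return_home(status: VehicleStatus) -> bool:
    """Return True when either failsafe condition from the list is met."""
    low_battery = status.battery_fraction <= LOW_BATTERY_FRACTION
    link_lost = status.seconds_since_link >= LINK_TIMEOUT_S
    return low_battery or link_lost
```

A real system would typically also debounce these signals and check that a valid home position exists before committing to the maneuver.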

What is overhyped / 2-5 years away:

1. Full autonomy in GNSS-denied, dynamic environments

• Lab demos ≠ real world (light changes, RF interference)

• Needs robust fusion + fallback logic, not just AI

2. End-to-end neural navigation

• Elegant idea, hard to certify/debug

• Hybrid (classical + ML) is more practical for defense

3. Swarm intelligence at scale

• 3-5 drones in lab ≠ 100+ in contested RF

• Latency, sync, failure handling still unsolved

4. Zero-shot generalization

• Simulation ≠ reality. Domain gap is real.

• Field data fine-tuning still mandatory

5. Explainable AI for safety-critical decisions

• Certification needs traceability. Black-box = adoption barrier
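The "robust fusion + fallback logic" point under item 1 can be made concrete with a small sketch of a navigation fallback ladder. This is an assumption-laden illustration: the `NavMode` names, the `select_nav_mode` function, and the two boolean health flags are hypothetical, and a fielded system would derive those flags from estimator covariances, innovation checks, and timeouts rather than receive them ready-made.

```python
from enum import Enum


class NavMode(Enum):
    GNSS_INS = "gnss_ins"   # GNSS + IMU fusion, the nominal mode
    VIO = "vio"             # camera + IMU fallback when GNSS is denied
    LAND = "land"           # last resort: controlled descent


def select_nav_mode(gnss_ok: bool, vio_ok: bool) -> NavMode:
    """Pick the best available navigation source, degrading step by step.

    gnss_ok and vio_ok are hypothetical health flags supplied by the caller.
    """
    if gnss_ok:
        return NavMode.GNSS_INS
    if vio_ok:
        return NavMode.VIO
    return NavMode.LAND
```

The point of the explicit ladder is traceability: every mode change maps to a rule a reviewer can read, which is much harder to show for an end-to-end learned policy.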

Quick evaluation framework:

Ask before adopting:

1. Tested in lab, controlled field, or real ops?

2. Graceful degradation when sensors fail?

3. Clear certification path?

4. Runs on embedded hardware?

5. How much field data was needed?

If any answer is "unclear" → treat as research, not product.
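The rule above can be written down as a small checklist function. This is a sketch only: the question keys and the `classify_feature` name are hypothetical, and treating a missing answer as "unclear" is an added assumption, not something the framework states.

```python
# Short keys standing in for the five questions above (hypothetical names).
QUESTIONS = (
    "test_environment",      # lab, controlled field, or real ops?
    "graceful_degradation",  # behavior when sensors fail?
    "certification_path",    # clear certification path?
    "embedded_hardware",     # runs on embedded hardware?
    "field_data_needed",     # how much field data was needed?
)


def classify_feature(answers: dict[str, str]) -> str:
    """Return "research" if any answer is unclear, otherwise "product"."""
    for question in QUESTIONS:
        # Assumption: an unanswered question counts as "unclear".
        answer = answers.get(question, "unclear").strip().lower()
        if answer == "unclear":
            return "research"
    return "product"
```

For example, a vendor who answers four questions concretely but says "unclear" on the certification path would still be classified as research.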

Key Insight:

Autonomy isn't binary. It's a spectrum. In defense, the goal isn't "no human" - it's "the right human input, at the right time".

Progress is real. Hype slows adoption of what actually works.

Related: GEOCOM Co. LLC (www.geocomco.eu), DeepTechRnD (www.deeptechrnd.eu), SpearXAgro (www.spearxagro.eu)

© 2025 - 2026. All rights reserved. SpearX Projects. Geocom Co. LLC

SpearX is an EU-Ukrainian company located in strategic areas for UAV R&D.