Advanced Guidance Systems for High-Speed Intercepts

Discover how modern guidance systems leverage computer vision and AI to adapt to varying target speeds and sensor spectra. Learn about the requirements for high-speed intercepts, including frame ra...

4/28/2026 · 1 min read

Designing guidance systems is not one-size-fits-all.

The architecture changes completely depending on target speed and sensor spectrum.

For standard targets (up to roughly 50-90 km/h, such as an FPV drone), 30 FPS and 100-150 ms of system latency are usually enough. Traditional tracking filters (Kalman or correlation-based) can recover from short target losses. The angular shift between frames is small, so prediction errors are easy to correct.

For high-speed intercepts (150-300+ km/h), the physics changes. At 30 FPS, a target at 150-300 km/h covers roughly 1.4-2.8 m between frames.

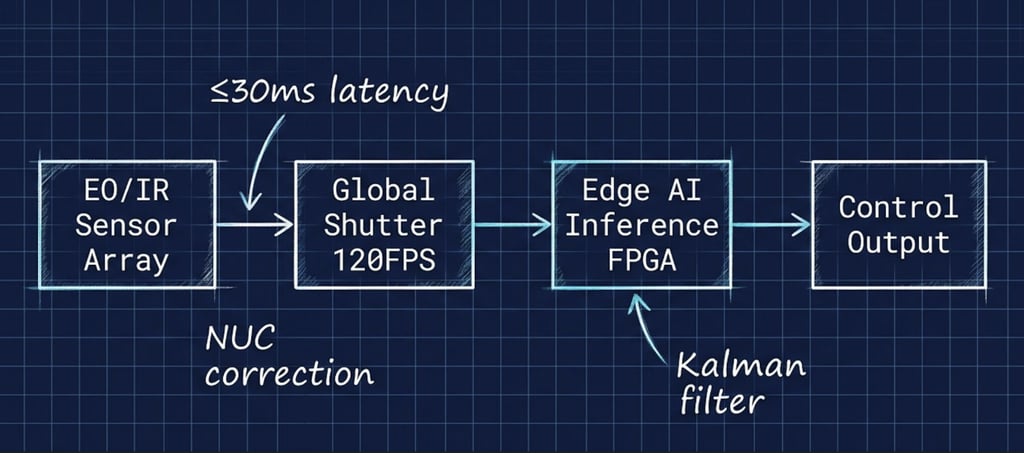
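The per-frame displacement behind these speed classes is simple arithmetic, sketched below. The function name `meters_per_frame` is an illustrative assumption, and the calculation covers only the target's own speed. Closing speed and the resulting angular shift depend on range and geometry, which the article does not specify.

```python
def meters_per_frame(speed_kmh: float, fps: float) -> float:
    """Distance a target covers between two consecutive frames."""
    speed_ms = speed_kmh / 3.6  # convert km/h to m/s
    return speed_ms / fps


# Compare the speed classes from the text across common frame rates.
for speed_kmh in (90, 150, 300):
    for fps in (30, 60, 120):
        distance = meters_per_frame(speed_kmh, fps)
        print(f"{speed_kmh:>3} km/h @ {fps:>3} FPS: {distance:.2f} m/frame")
```

Doubling the frame rate halves the distance covered between frames, which is one reason the higher speed class pushes the frame rate requirement up.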

Here, you need 60-120 FPS, a global shutter to eliminate rolling-shutter distortion, short exposure times to limit motion blur, and total latency under 30-50 ms (sensor → preprocessing → AI inference → control output).
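One way to reason about the end-to-end figure is as a budget summed over the four stages named above. The sketch below is a minimal illustration, not a measured profile: the per-stage latencies are hypothetical placeholders, and `Stage`, `total_latency_ms`, and `within_budget` are names introduced here for the example.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    latency_ms: float


def total_latency_ms(stages):
    """Sum the latency of every stage in the pipeline."""
    return sum(stage.latency_ms for stage in stages)


def within_budget(stages, budget_ms):
    """True if the summed stage latency fits the end-to-end budget."""
    return total_latency_ms(stages) <= budget_ms


# Hypothetical per-stage numbers, chosen only to illustrate the bookkeeping.
pipeline = [
    Stage("sensor exposure + readout", 8.0),
    Stage("preprocessing", 4.0),
    Stage("AI inference", 12.0),
    Stage("control output", 3.0),
]

total = total_latency_ms(pipeline)
print(f"total: {total:.1f} ms")
print(f"fits 30 ms budget: {within_budget(pipeline, 30.0)}")
print(f"fits 25 ms budget: {within_budget(pipeline, 25.0)}")
```

In practice each stage would be measured on the target hardware, and the worst-case value matters more than the average because the loop needs predictable timing.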

If the control loop is slower than the time the target needs to leave the effective field of view, hit probability drops sharply. Blur is not just a visual problem. It destroys edge detection, breaks standard trackers and forces the system to guess instead of measure.

This is where AI and computer vision become essential. Modern seekers do not just follow pixels. They use optimized neural networks to reconstruct blurred contours, predict non-linear maneuvers, classify threats in real time, and fuse data from multiple sensors. The real challenge is not model accuracy in a lab, but stable edge inference under strict power, thermal, and timing limits. Models must run deterministically on low-power SoCs while handling vibration, G-forces and fast lighting changes.

Sensor spectrum also changes everything. Visible (EO) cameras give high resolution and clear texture, making object classification easier. But they depend on daylight and weather, and they are very sensitive to motion blur at high angular rates. Infrared (MWIR/LWIR) sensors see heat signatures day and night, through smoke or light obscurants.

However, IR has lower resolution, different noise patterns, and needs constant non-uniformity correction (NUC). AI trained on EO data will fail on IR without retraining, new datasets and hybrid fusion architectures that sync spatial and temporal data across bands.
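To make the NUC step concrete, here is a minimal sketch of two-point correction, a common textbook scheme. The article does not say which NUC method is used, so this is an assumption for illustration. Each pixel gets a gain and an offset computed from two uniform reference frames (a "cold" and a "hot" scene), so that every pixel reports the same value for the same uniform input. The four-pixel frames are made-up numbers.

```python
def mean(values):
    return sum(values) / len(values)


def two_point_nuc(cold, hot):
    """Per-pixel gain and offset from two uniform reference frames.

    Frames are flattened lists of raw pixel values. Assumes every pixel
    responds differently to the two references (hot != cold), i.e. no
    dead pixels.
    """
    mean_cold, mean_hot = mean(cold), mean(hot)
    gains, offsets = [], []
    for c, h in zip(cold, hot):
        gain = (mean_hot - mean_cold) / (h - c)
        gains.append(gain)
        offsets.append(mean_cold - gain * c)
    return gains, offsets


def apply_nuc(raw, gains, offsets):
    """Apply the per-pixel linear correction to a raw frame."""
    return [g * r + o for r, g, o in zip(raw, gains, offsets)]


# Hypothetical 4-pixel sensor with mismatched pixel responses.
cold = [100.0, 110.0, 95.0, 105.0]
hot = [200.0, 230.0, 185.0, 215.0]

gains, offsets = two_point_nuc(cold, hot)
flat_cold = apply_nuc(cold, gains, offsets)
flat_hot = apply_nuc(hot, gains, offsets)
print(flat_cold)
print(flat_hot)
```

After correction, both reference frames come out uniform at their frame means. Because detector response drifts with temperature, the gain and offset tables have to be refreshed during operation, which is why the correction is an ongoing cost and not a one-time calibration.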

Next-generation guidance systems win by design, not by specs alone. They combine frame rate, spectral response and AI inference into one tight control loop with predictable latency.


© 2025 - 2026. All rights reserved. SpearX Projects. Geocom Co. LLC
